Multiple-Choice Questions

Multiple-choice questions: they just might be (with apologies to chemists) the "universal solvent" of assessments, given their ubiquitous appearance in tests across a variety of disciplines. Despite their common use, multiple-choice questions (MCQs) are often maligned. Criticisms related to student learning include that MCQs test recognition rather than recall (Little, Bjork, Bjork & Angello, 2012), that poorly written MCQs can frustrate students’ accurate interpretation of a question (Burton, Sudweeks, Merrill & Wood, 1991), and that MCQs test students’ ability to memorize material rather than assessing higher-order thinking (DiBattista, 2008).

There are certain contexts where multiple-choice tests are not suitable for students to demonstrate the achievement of learning outcomes (e.g., outcomes where students need to document their process, organize thoughts, perform a task, or provide examples to demonstrate their learning). However, in other instructional contexts, such as large classes, the MCQ test is often seen as the most pragmatic choice due to the logistical limitations of other test types. The goal, then, is to develop the best multiple-choice test possible for these situations. But how can MCQs and, in turn, multiple-choice tests be constructed in a way that addresses the often-cited limitations of the assessment?

Writing “better” multiple-choice questions

There certainly are benefits (beyond the simple question of logistics) to selecting MCQs to assess student learning. As DiBattista (2008, p. 119) describes, for tests of a comparable length, “well-chosen multiple-choice questions can provide a broader coverage of course content than [short-answer or essay-type] questions”. An additional benefit is the (generally) higher statistical reliability of multiple-choice questions when compared to short-answer or essay-type questions. In this context, higher reliability means that if the same student wrote two tests designed to measure their understanding of the same material, their scores on the two tests would be more similar.

So, what guidelines can instructors follow to emphasize the benefits of MCQs for student learning, while addressing the limitations of MCQs?

1. Give yourself an appropriate amount of time to construct the MCQs

The scholarly literature on writing MCQs is clear: writing good MCQs is a difficult and time-consuming process (Collins, 2006). Some literature suggests limiting yourself to creating no more than three to four questions in one day. One strategy instructors use to ensure they set aside enough time for authoring MCQs is to write one or two questions after each class.

When creating questions, it is also worth making note of the answer’s source. A direct reference to the source material will help save you time, in case you need to revise the question at a later date, or want to address student questions.

2. Link MCQs to test specific learning outcomes

Each MCQ should be constructed to test a single course concept or outcome. If you follow the suggestion in guideline #1 of spreading the construction of MCQs over time, consider writing one or two MCQs that are linked directly to a lecture’s or seminar’s learning outcomes for that day.

To ensure that you are assessing your course learning outcomes at varying levels of cognitive complexity, you could create a test blueprint similar to the one linked here.
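As a rough illustration of what a test blueprint captures, the sketch below tallies a question bank by learning outcome and cognitive level. The question ids, outcome labels (`LO1`, `LO2`, `LO3`), and function names are hypothetical and used only for illustration; the level names follow the revised taxonomy described under guideline #3.

```python
from collections import Counter

# Cognitive levels of the revised taxonomy, least to most complex.
LEVELS = ["remember", "understand", "apply", "analyse", "evaluate", "create"]

# Hypothetical question bank: (question id, learning outcome, cognitive level).
questions = [
    ("Q1", "LO1", "remember"),
    ("Q2", "LO1", "apply"),
    ("Q3", "LO2", "understand"),
    ("Q4", "LO2", "apply"),
    ("Q5", "LO3", "analyse"),
]


def build_blueprint(items):
    """Count how many questions target each (outcome, level) pair."""
    return Counter((outcome, level) for _, outcome, level in items)


def format_blueprint(blueprint):
    """Return the blueprint as plain-text rows, one row per learning outcome."""
    outcomes = sorted({outcome for outcome, _ in blueprint})
    rows = ["outcome " + " ".join(f"{level:>10}" for level in LEVELS)]
    for outcome in outcomes:
        # A Counter returns 0 for pairs that have no questions yet.
        counts = " ".join(f"{blueprint[(outcome, level)]:>10}" for level in LEVELS)
        rows.append(f"{outcome:<7} {counts}")
    return "\n".join(rows)


blueprint = build_blueprint(questions)
print(format_blueprint(blueprint))
```

Empty cells in the printed grid point to outcomes or levels that the test does not yet cover.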

3. Consider the sophistication of student learning that the MCQ is testing

Here, Anderson & Krathwohl’s (2001) expanded taxonomy of learning can help to ensure that MCQs are aligned to the complexity of knowledge expected. This taxonomy, a revised version of Bloom’s taxonomy, includes six levels describing increasing cognitive complexity, listed below from least complex to most complex:

- remember

- understand

- apply

- analyse

- evaluate

- create.

In the case of MCQs, avoid writing a question that tests a student’s recall (Anderson & Krathwohl’s remember category) if what you expect is that students in the course should be able to make judgements about the material (Anderson & Krathwohl’s evaluate category). The key here is the alignment between the complexity of learning that students are expected to demonstrate and the complexity that the MCQ tests.

One approach to consider is evaluating multiple-choice questions using the Blooming Biology Tool (Crowe, Dirks & Wenderoth, 2008), or a disciplinary-appropriate adaptation of the tool, and ranking MCQs based on the complexity of learning being tested. You would want to see a tight alignment in complexity between the outcomes expected of learners in the course and the MCQs used to assess those outcomes.
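Once each question has been ranked, the alignment check can be done mechanically. The following is a minimal sketch, not part of the Blooming Biology Tool itself; the outcome labels, question ids, and the `misaligned` helper are hypothetical.

```python
# Cognitive levels of the revised taxonomy, least to most complex.
LEVELS = ["remember", "understand", "apply", "analyse", "evaluate", "create"]
RANK = {level: position for position, level in enumerate(LEVELS)}

# Hypothetical data: the level each outcome expects, and the level each
# question was judged to test.
expected_level = {"LO1": "apply", "LO2": "evaluate"}
question_level = {
    "Q1": ("LO1", "apply"),
    "Q2": ("LO2", "remember"),
    "Q3": ("LO2", "evaluate"),
}


def misaligned(expected, questions):
    """Return (question id, gap) for questions not at their outcome's level.

    A negative gap means the question tests a lower level than expected.
    """
    flagged = []
    for question_id, (outcome, level) in questions.items():
        gap = RANK[level] - RANK[expected[outcome]]
        if gap != 0:
            flagged.append((question_id, gap))
    return flagged


print(misaligned(expected_level, question_level))
```

Here only `Q2` is flagged: it tests recall for an outcome that expects evaluation.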

4. Write a clear, complete stem

The question stem needs to be a complete statement, linked to a single specific concept or problem, and written so that only one of the provided alternatives is correct. Avoid repeating words in each of the alternatives; rather, place that language in the stem (Burton et al., 1991). Structure the stem so that you’re asking for a correct answer. Negatives and double negatives are more difficult for students to understand (Collins, 2006), which means you risk testing a student’s reading ability rather than their understanding of the concept.

5. All alternatives should be plausible

Properly-constructed multiple-choice tests have been shown not only to assess student learning, but act as learning events in and of themselves (Little et al., 2012). A key condition of whether the multiple-choice test improves student performance in future tests is the plausibility of all alternatives—that is, the alternatives should be seen as possible answers.

Distractors are the alternatives that result in an incorrect answer. If your aim is to write MCQs that assess student learning, “implausible, trivial or nonsensical” (Collins, 2006, p. 548) distractors should not be used, even if they may be amusing for test-takers. Collins (2006, p. 548) goes on to note that “The best distractors are (a) statements that are accurate but do not fully meet the requirements of the problem and (b) [are] incorrect statements that seem right to the examinee. Each incorrect option should be plausible but clearly incorrect.” Distractors should all be created or linked to one another, and should fall into the same conceptual category as the answer.

6. Aim to write between three and four alternatives

A meta-analysis of assessment research (Rodriguez, 2005) has shown that in well-written MCQs with equally plausible alternatives, three alternatives are enough to test student understanding: two distractors and one correct answer. Consider that if you construct more questions with just three alternatives, you can likely increase the number of questions in a multiple-choice test, thus improving the coverage of concepts being tested. Writing MCQs with five or more alternatives offers no real benefit for assessing students’ understanding.
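The coverage argument can be made concrete with some simple arithmetic. The sketch below assumes, purely for illustration, that your writing effort is a fixed pool of alternatives; the budget of 60 and the function name are hypothetical.

```python
def questions_within_budget(total_alternatives: int, alternatives_per_question: int) -> int:
    """Number of complete questions a fixed pool of written alternatives can fill."""
    if alternatives_per_question < 2:
        raise ValueError("an MCQ needs at least one answer and one distractor")
    return total_alternatives // alternatives_per_question


budget = 60  # hypothetical: total alternatives you have time to write
for n in (3, 4, 5):
    print(n, "alternatives per question ->", questions_within_budget(budget, n), "questions")
```

Under this assumption, the same effort yields 20 three-alternative questions, 15 four-alternative questions, or 12 five-alternative questions.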

Writing multiple choice questions to assess higher-order thinking

MCQs do not have to be limited to only assessing recall. Students’ higher-order thinking, such as application, analysis, and synthesis, can be tested using well-constructed MCQs.

Good, clear, higher-order MCQs take longer, and are more difficult, to write than factual questions. Taking the needed time and getting feedback on your questions is important.

Parkes and Zimmaro (2016) suggest two approaches to writing MCQs to assess higher-order thinking: context-dependent item sets and vignette-based questions (also see DiBattista, 2008).

Context-dependent Item Sets

A context-dependent item set is a series of MCQs that asks students to answer questions related to a stimulus such as a table, graph, picture, screenshot, video, or simulation.

Parkes and Zimmaro (2016) cite the following advantages for context-dependent item sets (also called interpretive exercises).

- It can be easier to write higher-order items by basing them on a stimulus rather than writing them without that context.

- This type of item set allows students to apply their knowledge to novel real world contexts. The novelty of the context is important when trying to assess higher order learning. If students are simply recalling an application, for example, that they read in the textbook or saw in class, this will only require them to recognize the correct response from the options, not apply their learning.

- They also allow your students to see their knowledge within a context and apply it to new contexts, rather than treating it as independent pieces of knowledge.

In terms of writing such items, Parkes and Zimmaro (2016) highlight the following issues.

- Because a set of items refers to the same stimulus (e.g., graph, table, video), the items may be contingent upon one another. It is crucial to ensure that one question does not give away the correct answer to another question.

- If the stimulus you are using is not clear (e.g., the labels of the axes of a graph are not clear), it may lead to incorrect answers for the full set of questions, not just one individual question.

An example of a single context-dependent question from Burton et al. (1991, p. 9) is provided below.

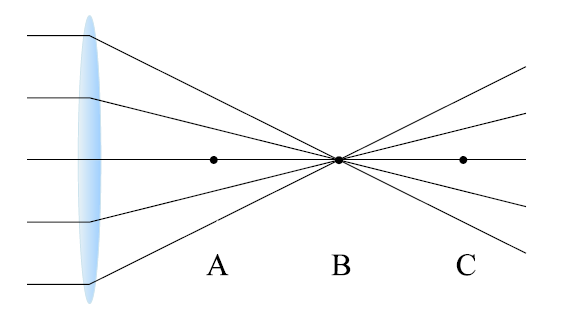

In the diagram below, parallel light rays pass through a convex lens and converge to a focus.

They can be made parallel again by placing a:

A). Concave lens at point B.

B). Concave lens at point C.

C). Second convex lens at point A.

D). Second convex lens at point B.

E). Second convex lens at point C.*

* indicates the correct response

In this question, students are required to apply their understanding of light and lenses based on the situation outlined in the diagram, not simply remember the definitions of the constructs.

Vignette-based MCQ

With this form of MCQ, the stem includes a scenario or short case that reflects a real world situation consistent with the learning outcomes. The stem is followed by one or more questions addressing the scenario.

As with context-dependent items, the novelty of the situation is crucial to ensure you are assessing higher order thinking and not simply recognition. Also, the level and clarity of language is particularly important given the increased reading requirements of the vignettes relative to standard MCQ (Gronlund, 2006).

An example of such a vignette-based question from DiBattista (2008, p. 121) is provided below.

According to census data, people are having fewer children nowadays than they did 50 years ago. Your friend Anne tells you that she does not believe this because the young couple who live next door to her are both under 30 and already have four children. If Keith Stanovich were told about this, what might you reasonably expect him to say?

A). The census data must be wrong.

B). Anne’s comment illustrates valid probabilistic reasoning.

C). Anne’s comment illustrates the use of “person-who” statistics.*

D). The young couple provide an exception that actually serves to prove the rule.

In this example, students are not simply required to recognize the definition of Stanovich’s “person-who” statistics, as would be the case with many lower-order MCQs, but are required to analyze a scenario that is new to them and answer based on their understanding of the concept (DiBattista, 2008).

References

Learning Development & Success, Western University (n.d.). Online exams. https://www.uwo.ca/sdc/learning/onlineexams.pdf

Burton, S. J., Sudweeks, R. R., Merrill, P. F., & Wood, B. (1991). How to prepare better multiple-choice test items: Guidelines for university faculty. Brigham Young University Testing Services and The Department of Instructional Science. https://testing.byu.edu/handbooks/betteritems.pdf

DiBattista, D. (2008). Making the most of multiple-choice questions: Getting beyond remembering. Collected Essays on Learning and Teaching, 1, 119–122.

Gronlund, N. E. (2006). Assessment of student achievement (8th ed.). Pearson.

Parkes, J., & Zimmaro, D. (2016). Learning and assessing with multiple-choice questions in college classrooms. Routledge.

See also:

Assessment Series - this series for instructors occasionally offers workshops on writing multiple-choice questions to assess higher order thinking, and item analysis for multiple-choice questions.

Questions?

If you would like to talk in more detail about writing MCQs, please contact one of our educational developers.

References

Anderson, L.W. & Krathwohl, D.R. (Eds.) (2001). A taxonomy for learning, teaching, and assessing: A revision of Bloom’s taxonomy of educational objectives. New York: Addison Wesley Longman.

Burton, S. J., Sudweeks, R. R., Merrill, P. F., & Wood, B. (1991). How to prepare better multiple-choice test items: Guidelines for university faculty. Provo, UT: Department of Instructional Science, Brigham Young University. Retrieved from https://www2.kumc.edu/comptraining/documents/WrittingbetterMCtestitems1991BrighamYoung.pdf

Collins, J. (2006). Writing multiple-choice questions for continuing medical education activities and self-assessment modules. Radiographics, 26(2), 543–551. doi:10.1148/rg.262055145

Crowe, A., Dirks, C., & Wenderoth, M. P. (2008). Biology in bloom: implementing Bloom's Taxonomy to enhance student learning in Biology. Cell Biology Education, 7(4), 368–381. doi:10.1187/cbe.08-05-0024

Little, J. L., Bjork, E. L., Bjork, R. A., & Angello, G. (2012). Multiple-choice tests exonerated, at least of some charges. Psychological Science, 23(11), 1337–1344. doi:10.1177/0956797612443370

Rodriguez, M. C. (2005). Three options are optimal for multiple‐choice items: A meta‐analysis of 80 years of research. Educational Measurement: Issues and Practice, 24(2), 3–13. doi:10.1111/j.1745-3992.2005.00006.x